We've handpicked the top five stories and updates from the second quarter of 2023 that you need to know about.

Meta hit with record €1.2BN fine for mishandling personal data

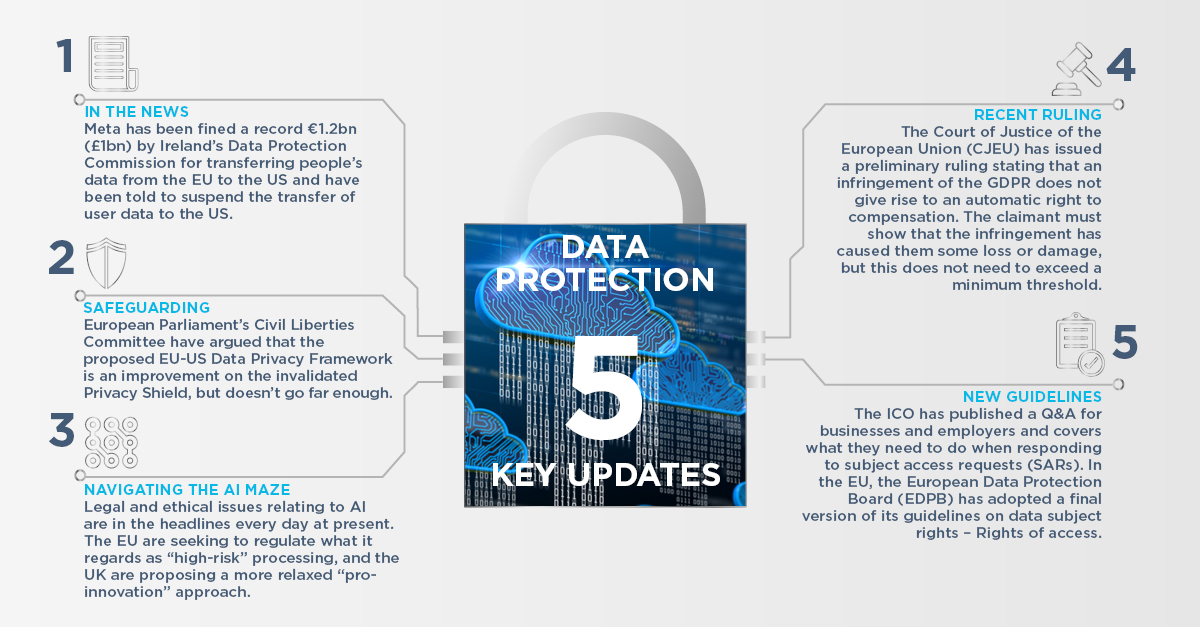

Meta, the company that owns Facebook, has been fined a record €1.2bn (£1bn) by Ireland's Data Protection Commission (DPC) for transferring people's data from the EU to the US on the basis of the EU's standard contractual clauses (SCCs). The company has also been told to suspend the transfer of user data to the US.

Meta has been given five months to implement the suspension of Facebook data transfers and six months to stop the unlawful processing in the US of personal data already transferred across the Atlantic.

The DPC's fine relates to a legal challenge over concerns that European users' data is not sufficiently protected from access by US intelligence agencies when transferred to the US.

Meta said that it had been "singled out" by the DPC despite other businesses using the same data transfer process and will be appealing the "unjustified and unnecessary" ruling.

The decision does not affect Facebook in the UK, but the Information Commissioner's Office (ICO) has said it will "review the decision in due course".

Watch AG's webinar in which some of our data protection experts provide their insights into the decision and its impact. You can also read our special bulletin which provides more information here.

So what?

The decision does not necessarily mean that all personal data transfers to the US must cease, but the US government's surveillance powers mean that organisations must tread carefully. As outlined in the next item, the European Commission is in the process of adopting the EU-US Data Privacy Framework, which is intended to provide a new mechanism for transfers to the US, but this is facing a number of obstacles.

In the meantime, organisations that wish to transfer personal data to the US should conduct risk assessments on a case-by-case basis to decide whether each transfer can go ahead and, if so, document the transfer, using the SCCs or (if appropriate) Binding Corporate Rules.

MEPs argue EU-US data privacy framework does not provide sufficient safeguards

In a resolution adopted in April 2023 by the European Parliament's Civil Liberties Committee, MEPs argued that the proposed EU-US Data Privacy Framework is an improvement on the invalidated Privacy Shield, but still does not provide sufficient safeguards. MEPs noted that the framework allows for bulk collection of personal data in certain instances, does not make bulk data collection subject to independent prior authorisation and does not provide clear rules on data retention. The framework is intended to provide a safeguard mechanism for the transfer of personal data from the EU to the US, replacing the Privacy Shield, which was invalidated in the Schrems II decision in 2020.

Under the new framework, a Data Protection Review Court would be set up, aimed at providing redress to EU data subjects. The decisions of this court would be kept confidential, which the Committee considers a violation of individuals' rights to access and rectify data about them. The court would not be truly independent, as the US President could dismiss judges and overrule their decisions.

MEPs have said that EU citizens need legal certainty and a future-proof data transfer regime, but this is not guaranteed under the new framework. Privacy activist Max Schrems has already indicated that he will challenge the framework in court, meaning that the Court of Justice of the European Union (CJEU) may invalidate the current proposal as it did previous data transfer frameworks. Didier Reynders, the European Commissioner for Justice, has said that the new framework can realistically be in place by this summer, as necessary steps on the US side, including establishment of a complaints court, are "well advanced", and that he believes the framework would survive a legal challenge.

So what?

As above, organisations need to proceed with caution when transferring personal data to the US, but this does not mean that all such transfers must cease. Instead, organisations should take a risk-based approach and conduct case-by-case impact assessments to decide whether their proposed transfers can go ahead.

Artificial intelligence developments

A member of the Italian Data Protection Authority has warned that OpenAI, the owner of ChatGPT, is facing a finding of a major breach of the EU's privacy rules by its artificial intelligence chatbot.

In March 2023, the Italian DPA blocked the use of ChatGPT in Italy due to privacy concerns relating to the tool and issued a list of requirements for OpenAI to meet in order to have the block lifted. ChatGPT was reinstated in the country on 28 April 2023 after the DPA received a letter from OpenAI outlining the measures it had taken to meet these requirements.

In other developments, the ICO has shown support for the UK Government's White Paper on Artificial Intelligence regulation, saying that it aligns with its own strategic ambitions set out in ICO25. Meanwhile, the AI Act, a proposed European law on artificial intelligence, is a step closer to becoming law following a crunch vote by MEPs in early May 2023. The draft Act now faces a plenary vote in the European Parliament in June, followed by trilogue talks between representatives of the European Parliament, the Council of the European Union and the European Commission before it becomes law. On 31 May the EU digital chief announced a joint EU and US proposal to develop an emergency code of conduct "within weeks" that countries around the world could sign up to ahead of establishing longer-term legislation.

So what?

While legal and ethical issues relating to AI appear to be in the headlines every day at present, the EU and UK appear to be taking diverging approaches, with the EU seeking to regulate what it regards as "high-risk" processing, and the UK proposing a more relaxed "pro-innovation" approach.

While we wait for the EU and UK laws to progress, the ICO has published guidance on the data protection aspects of AI, stressing the importance of applying the existing law, for example conducting data protection impact assessments (DPIAs), being transparent with users about what data you are collecting and how you are using it, and being alert to the risks of bias and discrimination.

CJEU rules compensation for non-material damage not automatic under GDPR

The Court of Justice of the European Union (CJEU) has issued a preliminary ruling in case C-300/21, UI v Österreichische Post AG, stating that a mere infringement of the GDPR does not give rise to an automatic right to compensation under Article 82. The claimant must show that the infringement has caused them some loss or damage, but that damage does not need to exceed a minimum threshold of seriousness.

So what?

CJEU rulings are binding on EU courts. While the ruling is not binding on the UK courts, they may have regard to it. The English courts have shown reluctance to award compensation for breaches of data protection law in all but the most serious cases, and damages awarded have been low. On that basis, organisations may consider that compensation claims are relatively low risk, but the risk of fines and reputational damage remains significant.

EDPB and ICO publish further guidance on subject access requests

The ICO has published a new set of Q&As for businesses and employers on responding to subject access requests (SARs). These Q&As provide guidance on some of the common issues involved in responding to SARs, including more complex points such as dealing with emails the data subject is copied into, social media searches and CCTV footage in which other people appear, as well as employment-specific points such as requests from workers going through a tribunal or grievance process.

Between April 2022 and March 2023, the ICO received over 15,000 complaints related to SARs.

Those who fail to respond to SARs promptly, or at all, risk a fine or reprimand.

In the EU, the European Data Protection Board (EDPB) has adopted a final version of its guidelines on data subject rights – Right of access. These guidelines provide more detail on the scope of the right of access, how it is implemented in different scenarios, the format of a SAR and the information a controller should supply when responding to a data subject.

So what?

The EDPB guidelines are not binding in the UK, but may provide helpful guidance, particularly on issues not covered by ICO guidance.

Some large organisations find the process of responding to SARs onerous, but the Data Protection and Digital Information (No. 2) Bill, which is currently going through Parliament, contains changes to the rules on when an organisation can refuse a SAR or charge a reasonable fee for responding.

The Bill changes the threshold for refusing or charging a reasonable fee for a SAR from "manifestly unfounded or excessive" to "vexatious or excessive" and gives examples of requests that may be vexatious: those that are intended to cause distress, are not made in good faith, or are an abuse of process. These changes are intended to give organisations more flexibility, reducing the burden on businesses.